Media Summary: In this video, I have explained in detail the In this workshop, Lewis Tunstall and Edward Beeching from Hugging Face will discuss a powerful Hii, Today we are reviewing the paper called RLHF - Reinforcement Learning From Human Feedback. It is one of the pioneering ...

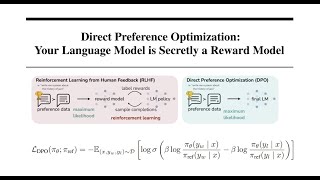

Dpo Coding Direct Preference Optimization Dpo Code Implementation Dpo In Llm Alignment - Detailed Analysis & Overview

In this video, I have explained in detail the In this workshop, Lewis Tunstall and Edward Beeching from Hugging Face will discuss a powerful Hii, Today we are reviewing the paper called RLHF - Reinforcement Learning From Human Feedback. It is one of the pioneering ... While large-scale unsupervised language models (LMs) learn broad world knowledge and some reasoning skills, achieving ...