Media Summary: Stanford CS234 Reinforcement Learning I Offline RL 2 and Guest Lecture on ... In this workshop, Lewis Tunstall and Edward Beeching from Hugging Face will discuss a powerful alignment technique called ...

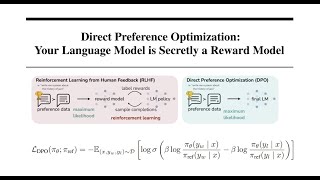

Direct Preference Optimization DPO Paper Explained - Detailed Analysis & Overview

The video lecture discusses and explains the derivation of ...