
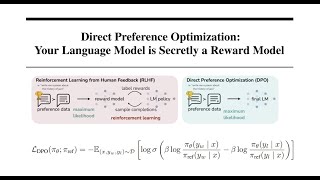

Direct Preference Optimization - Detailed Analysis & Overview

In this workshop, Lewis Tunstall and Edward Beeching from Hugging Face will discuss a powerful alignment technique called ...

While large-scale unsupervised language models (LMs) learn broad world knowledge and some reasoning skills, achieving ...

Stanford CS234 Reinforcement Learning | Offline RL 2 and Guest Lecture on ...

Learn how Reinforcement Learning from Human Feedback (RLHF) actually works and why ...
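RLHF, as it is usually described, first trains a reward model on pairs of responses where a human marked one as preferred, then optimizes the language model against that learned reward with an RL algorithm such as PPO. The sketch below illustrates only the first step. It assumes the common Bradley-Terry preference model, P(y_w ≻ y_l) = σ(r(x, y_w) - r(x, y_l)), and scalar rewards already produced by some reward model. The function names are hypothetical, and this is not code from any of the lectures listed here.

```python
import math
from typing import Sequence


def log_sigmoid(z: float) -> float:
    """Numerically stable log(sigmoid(z))."""
    if z >= 0:
        # log σ(z) = -log(1 + e^{-z}); e^{-z} <= 1 here, so exp cannot overflow.
        return -math.log1p(math.exp(-z))
    # For z < 0: log σ(z) = z - log(1 + e^{z}); e^{z} < 1 here.
    return z - math.log1p(math.exp(z))


def preference_probability(reward_chosen: float, reward_rejected: float) -> float:
    """Bradley-Terry probability that the 'chosen' response is preferred."""
    return math.exp(log_sigmoid(reward_chosen - reward_rejected))


def reward_model_loss(
    chosen_rewards: Sequence[float], rejected_rewards: Sequence[float]
) -> float:
    """Mean negative log-likelihood of the human preferences under Bradley-Terry."""
    if not chosen_rewards or len(chosen_rewards) != len(rejected_rewards):
        raise ValueError("inputs must be non-empty and the same length")
    # Each pair contributes -log σ(r_chosen - r_rejected).
    losses = [-log_sigmoid(rc - rr) for rc, rr in zip(chosen_rewards, rejected_rewards)]
    return sum(losses) / len(losses)


if __name__ == "__main__":
    # Equal rewards: the model is indifferent (p ≈ 0.5, loss ≈ log 2).
    print(preference_probability(1.0, 1.0))
    print(reward_model_loss([1.0], [1.0]))
    # Rewards ordered correctly for the first pair, incorrectly for the second.
    print(reward_model_loss([2.0, 0.5], [0.0, 1.5]))
```

Minimizing this loss pushes the reward of each preferred response above the reward of the rejected one; the resulting reward model is what the RL stage then maximizes.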

... 2025. This guest lecture covers RL for LLMs.

In this video, I break down DeepSeek's Group Relative Policy ...

DPO: A comparison of traditional human-preference alignment against ...
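Direct Preference Optimization replaces the separate reward model and RL loop of RLHF with a single classification-style loss on preference pairs, measured against a frozen reference model: L = -log σ(β[(log π_θ(y_w|x) - log π_ref(y_w|x)) - (log π_θ(y_l|x) - log π_ref(y_l|x))]). The sketch below is a minimal, dependency-free illustration of that loss. It assumes sequence-level log-probabilities (summed token log-probs of each response) have already been computed under the policy and reference models. The function names and the default `beta=0.1` are illustrative choices, not taken from the workshop or from any library.

```python
import math
from typing import Sequence


def log_sigmoid(z: float) -> float:
    """Numerically stable log(sigmoid(z))."""
    if z >= 0:
        # log σ(z) = -log(1 + e^{-z}); e^{-z} <= 1 here, so exp cannot overflow.
        return -math.log1p(math.exp(-z))
    # For z < 0: log σ(z) = z - log(1 + e^{z}); e^{z} < 1 here.
    return z - math.log1p(math.exp(z))


def dpo_loss(
    policy_chosen_logps: Sequence[float],
    policy_rejected_logps: Sequence[float],
    ref_chosen_logps: Sequence[float],
    ref_rejected_logps: Sequence[float],
    beta: float = 0.1,  # illustrative default; controls deviation from the reference
) -> float:
    """Mean DPO loss over a batch of (chosen, rejected) response pairs.

    Each entry is a sequence-level log-probability log π(y | x) under either
    the policy being trained or the frozen reference model.
    """
    n = len(policy_chosen_logps)
    if n == 0 or not (
        n == len(policy_rejected_logps) == len(ref_chosen_logps) == len(ref_rejected_logps)
    ):
        raise ValueError("all inputs must be non-empty and the same length")
    total = 0.0
    for pc, pr, rc, rr in zip(
        policy_chosen_logps, policy_rejected_logps, ref_chosen_logps, ref_rejected_logps
    ):
        # Log-ratios against the reference model act as implicit rewards.
        chosen_logratio = pc - rc
        rejected_logratio = pr - rr
        # Reward the policy for raising the chosen log-ratio above the rejected one.
        total += -log_sigmoid(beta * (chosen_logratio - rejected_logratio))
    return total / n


if __name__ == "__main__":
    # Policy identical to the reference: the margin is 0, so the loss is log 2.
    print(dpo_loss([-10.0], [-12.0], [-10.0], [-12.0]))
    # Policy made the chosen response likelier and the rejected one less likely.
    print(dpo_loss([-9.0], [-13.0], [-10.0], [-12.0]))
```

The term β·log(π_θ/π_ref) plays the role of an implicit reward, so the loss has the same logistic form as a Bradley-Terry preference loss, but it is optimized directly on the policy with no reward network and no sampling during training.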