Media Summary:

This video will teach you everything there is to know about the WordPiece algorithm for ...

Welcome to Zero to Hero for Natural Language Processing using TensorFlow! If you're not an expert on AI or ML, don't worry ...

This video is part of the Hugging Face course: Related videos : -

Word Based Tokenizers - Detailed Analysis & Overview

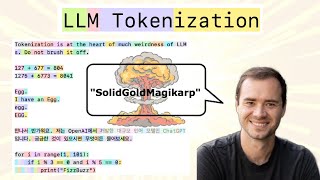

Tokens and embeddings are essential concepts to large language models (LLMs), and they both represent ...

Most devs are using LLMs daily but don't have a clue about some of the fundamentals. Understanding tokens is crucial because ...

Myself Shridhar Mankar, an Engineer | YouTuber | Educational Blogger | Educator | Podcaster. My Aim- To Make Engineering ...
