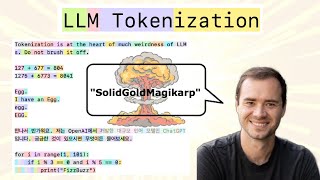

Media Summary: Most devs are using LLMs daily but don't have a clue about some of the fundamentals. Understanding tokens is crucial because ... This video will teach you everything there is to know about the WordPiece algorithm for ... Large Language Models don't actually understand language; they understand numbers. But how do we turn words into numbers ...

Character-Based Tokenizers - Detailed Analysis & Overview

In this lecture, we will learn about Byte Pair Encoding: the ... This excerpt from Hugging Face's NLP course provides a comprehensive overview of ... Welcome to Zero to Hero for Natural Language Processing using TensorFlow! If you're not an expert on AI or ML, don't worry ...

In the last lecture, we built our own TinyGPT LLM from scratch using manual ... Natural Language Processing (NLP), with a particular focus on ... Hugging Face's transformers library is the de facto standard for NLP - used by practitioners worldwide, it's powerful, flexible, and ...
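To make the idea of turning text into numbers concrete, here is a minimal sketch of a character-based tokenizer in Python. It is not taken from any of the videos summarized here; the class name `CharTokenizer` and its `stoi`/`itos` mappings are hypothetical names chosen for illustration. It assumes the vocabulary is simply every distinct character in a small sample corpus.

```python
class CharTokenizer:
    """Hypothetical minimal character-level tokenizer (illustration only)."""

    def __init__(self, corpus: str):
        # Vocabulary: every distinct character in the corpus,
        # sorted so that the ID assignment is deterministic.
        chars = sorted(set(corpus))
        self.stoi = {ch: i for i, ch in enumerate(chars)}  # character -> ID
        self.itos = {i: ch for ch, i in self.stoi.items()}  # ID -> character

    def encode(self, text: str) -> list[int]:
        # One ID per character; raises KeyError for characters
        # that never appeared in the corpus.
        return [self.stoi[ch] for ch in text]

    def decode(self, ids: list[int]) -> str:
        # Map each ID back to its character and join them.
        return "".join(self.itos[i] for i in ids)


tokenizer = CharTokenizer("hello world")
ids = tokenizer.encode("hello")
print(ids)                    # [3, 2, 4, 4, 5]
print(tokenizer.decode(ids))  # hello
```

The vocabulary here has only 8 entries, which shows the usual trade-off of this approach: a tiny vocabulary, but one token per character, so sequences become long. Subword schemes such as Byte Pair Encoding and WordPiece, mentioned above, merge frequent character sequences into single tokens to shorten them.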