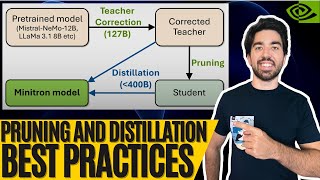

Pruning And Model Compression - Detailed Analysis & Overview

Four techniques to optimize the speed ...

tl;dr: This lecture covers various effective ...

Are you planning to deploy a deep learning ...

Authors: Jinyang Guo, Wanli Ouyang, Dong Xu. Description: In this work, we propose a unified ...

Ever wonder how powerful AI models can run on your smartphone? The secret is ...

Authors: Se Jung Kwon, Dongsoo Lee, Byeongwook Kim, Parichay Kapoor, Baeseong Park, Gu-Yeon Wei.

Hello everyone, and welcome. Today, we're diving into the fascinating world of Large Language ...

Learn all the ways Microsoft is a part of CVPR 2020.
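Two of the most common compression steps, pruning and quantization, can be illustrated with a short sketch. The one below uses textbook unstructured magnitude pruning (zero out the smallest-magnitude weights) followed by symmetric per-tensor 8-bit quantization. It is a generic illustration under those assumptions, not the method of any of the works listed above, and the function names `magnitude_prune` and `quantize_int8` are hypothetical.

```python
def magnitude_prune(weights, sparsity):
    """Hypothetical helper: zero out the `sparsity` fraction of weights
    with the smallest absolute value (unstructured magnitude pruning)."""
    if not 0.0 <= sparsity <= 1.0:
        raise ValueError("sparsity must be in [0, 1]")
    n_prune = int(len(weights) * sparsity)
    # Indices ordered by magnitude, smallest first
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    pruned = list(weights)
    for i in order[:n_prune]:
        pruned[i] = 0.0
    return pruned


def quantize_int8(weights):
    """Hypothetical helper: symmetric per-tensor quantization to [-127, 127].
    Returns the integer codes and the scale needed to dequantize (w ~ q * scale)."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


weights = [0.8, -0.05, 0.3, -0.6, 0.01, 0.2]
pruned = magnitude_prune(weights, sparsity=0.5)
print(pruned)  # [0.8, 0.0, 0.3, -0.6, 0.0, 0.0]
codes, scale = quantize_int8(pruned)
print(codes)   # [127, 0, 48, -95, 0, 0]
```

Pruning first and quantizing second means the zeroed weights map exactly to the integer code 0, so the sparsity survives quantization. Real frameworks typically apply these steps per layer and fine-tune afterwards to recover accuracy.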

![[Part 1] A Crash Course on Model Compression for Data Scientists](https://i.ytimg.com/vi/L1uuKPxNsHE/mqdefault.jpg)