
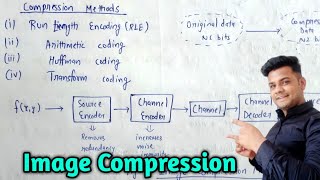

Model Compression - Detailed Analysis & Overview

Four techniques to optimize the speed ...

Are you planning to deploy a deep learning ...

Ever wonder how powerful AI models can run on your smartphone? The secret is ...

Learn how model quantization and distillation, two key techniques for large ...

tl;dr: This lecture covers various effective ...
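Distillation trains a small "student" model to reproduce the output distribution of a larger "teacher". The snippet below is a minimal sketch of the commonly used soft-target loss, assuming plain Python lists of logits for a single example. The function names and the defaults `temperature=2.0` and `alpha=0.5` are hypothetical, illustrative choices, not taken from any of the talks summarized here.

```python
import math
from typing import List


def softmax(logits: List[float], temperature: float = 1.0) -> List[float]:
    """Temperature-scaled softmax; subtracting the max keeps exp() stable."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)
    exps = [math.exp(z - m) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(student_logits: List[float],
                      teacher_logits: List[float],
                      temperature: float = 2.0) -> float:
    """KL(teacher || student) between softened distributions, times T^2.

    The T^2 factor is the usual correction that keeps the soft-target
    gradients on a similar scale as the temperature changes.
    """
    p = softmax(teacher_logits, temperature)  # soft targets from the teacher
    q = softmax(student_logits, temperature)  # student's softened prediction
    kl = sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)
    return temperature ** 2 * kl


def combined_loss(student_logits: List[float],
                  teacher_logits: List[float],
                  label: int,
                  alpha: float = 0.5,
                  temperature: float = 2.0) -> float:
    """Weighted mix of cross-entropy on the true label and the soft loss.

    alpha and temperature are illustrative defaults (assumptions), not tuned values.
    """
    hard = -math.log(softmax(student_logits)[label])
    soft = distillation_loss(student_logits, teacher_logits, temperature)
    return alpha * hard + (1 - alpha) * soft


# Hypothetical logits for a 3-class problem.
teacher = [4.0, 1.0, 0.5]
student = [2.0, 1.5, 0.5]
print(combined_loss(student, teacher, label=0))
```

A higher temperature flattens the teacher's distribution, so the student also learns how the teacher ranks the wrong classes, not just which class wins.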

This is a recorded presentation of one of the contributed talks in the poster session at ARCS 2022 ...

This explainer is about how corporations misuse int8 and ...

In this week's AI news roundup, we bring you the latest updates on two major developments: Mark Zuckerberg's exciting new ...
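Int8 quantization stores each weight as an 8-bit integer plus a shared floating-point scale. The sketch below shows one common variant, symmetric per-tensor quantization, in plain Python. The helper names `quantize_int8` and `dequantize_int8` are hypothetical, and this is not claimed to be the exact scheme used by any framework or video referenced here.

```python
from typing import List, Tuple


def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Symmetric per-tensor quantization (hypothetical helper).

    The largest magnitude in the tensor is mapped to 127, so every value
    lands in the integer range [-127, 127] with a single shared scale.
    """
    max_abs = max((abs(w) for w in weights), default=0.0)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0  # avoid divide-by-zero for all-zero input
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize_int8(q: List[int], scale: float) -> List[float]:
    """Map the integers back to approximate float values."""
    return [v * scale for v in q]


# Illustrative weights (made up for this example).
weights = [0.42, -1.27, 0.0, 0.9, -0.05]
q, scale = quantize_int8(weights)
restored = dequantize_int8(q, scale)
# Rounding to the nearest integer bounds the per-element error by scale / 2.
max_err = max(abs(w - r) for w, r in zip(weights, restored))
print(q, scale, max_err)
```

Each weight then takes 1 byte instead of the 4 bytes of float32, roughly a 4x reduction in storage for the tensor, at the cost of a rounding error of at most half the scale per element.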

![[Part 1] A Crash Course on Model Compression for Data Scientists](https://i.ytimg.com/vi/L1uuKPxNsHE/mqdefault.jpg)