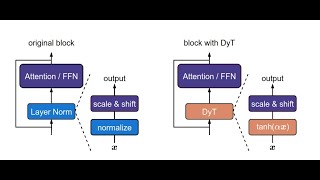

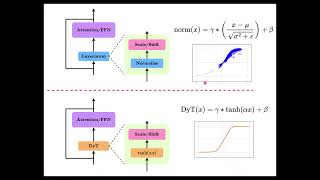


Paper Presentation 4 Transformers Without Normalization - Detailed Analysis & Overview

Chapters:

- 00:00 - 03:45 Introduction
- 03:45 - 16:06 Methodology
- 16:06 - 21:25 Results
- 21:25 - 39:46 Analysis
- 39:46 - 43:56 ...

Is LayerNorm outdated? Let's find out together. This video presents a summary of the CVPR 2025 Transformers Without Normalization: The Dynamic Tanh Paradigm

As a regular SWE, I want to share several key topics to better understand NFNet and NFResNet: High-Performance Large-Scale Image Recognition

We just wrapped up our second Genloop Research Jam where we explored Meta's