Media Summary: Part of An Introduction to Programming with SYCL on Perlmutter and Beyond on March 1, 2022. Slides and more details are at ... Follow along with Unit 9 in a Lightning AI Studio, an online reproducible environment created by Sebastian Raschka, that ... Part 2 of 5 in the “5 Essential LLM Optimization Techniques” series. Link to the 5 techniques roadmap: ...

3 Data Parallelism - Detailed Analysis & Overview

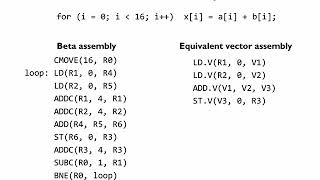

For more information about Stanford's online Artificial Intelligence programs visit: ... To learn more about ...

6:22 - Matrix Multiplication
8:37 - Motivation for Parallelism
9:55 - Review of Basic Training Loop
11:05 - ...

MIT 6.004 Computation Structures, Spring 2017. Instructor: Chris Terman. View the complete course: ...

... deal with this is called model parallelism, and with lots of data the way we deal with this is called ...

In the second video of this series, Suraj Subramanian gently introduces you to what is happening under the hood when you train a ...

On this channel you will find videos related to the course (see the channel's playlist). Learn and enjoy, and don't forget to subscribe! Check ...

![Parallel Processing, Scaling, and Data Parallelism. Course [03]](https://i.ytimg.com/vi/uaw1binEuKY/mqdefault.jpg)