Media Summary: In the second video of this series, Suraj Subramanian gently introduces you to what is happening under the hood when you train a ... In the first video of this series, Suraj Subramanian breaks down why ... Training a 7B, 7-B, or even 500B parameter model on a single GPU? Impossible. In this step-by-step guide you'll learn how to ...

How DDP Works: Distributed Data Parallel, Quickly Explained - Detailed Analysis & Overview

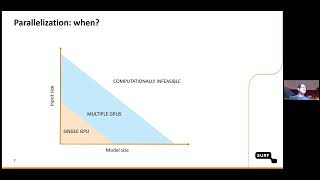

In this video, we give a short intro to Lightning's flag 'replace_sampler_ddp'. To learn more about Lightning, please visit the official ...

This NVIDIA-led training focuses on scaling GPU workloads with PyTorch ...

In this talk, software engineer Pritam Damania covers several improvements in PyTorch ...

This video goes over how to perform multi-node ...

Ever wondered how massive AI models like GPT are actually trained? While everyone's talking about ChatGPT, Claude, and ...

In the fourth video of this series, Suraj Subramanian walks through all the code required to implement fault-tolerance in ...

As datasets and models grow in complexity, mastering ...

In the third video of this series, Suraj Subramanian walks through the code required to implement ...

In the fifth video of this series, Suraj Subramanian walks through the code required to launch your training job across multiple ...