Media Summary: High Accuracy and High Fidelity Extraction of …; DeepHammer: Depleting the Intelligence of …; DEEPVSA: Facilitating Value-set Analysis with …

USENIX Security '20: Interpretable Deep Learning Under Fire - Detailed Analysis & Overview

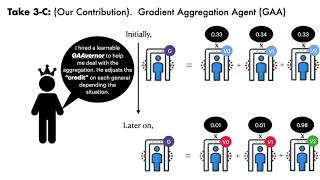

- High Accuracy and High Fidelity Extraction of …
- DeepHammer: Depleting the Intelligence of …
- DEEPVSA: Facilitating Value-set Analysis with …
- Adversarial Preprocessing: Understanding and Preventing Image-Scaling Attacks in …
- Hybrid Batch Attacks: Finding Black-box Adversarial Examples with Limited Queries (Fnu Suya, Jianfeng Chi, David Evans, and ...)
- Justinian's GAAvernor: Robust Distributed …

Shiqi Wang, Columbia University

Abstract: Due to the increasing deployment of …