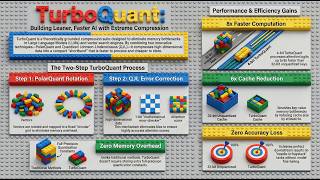

Media Summary: Long-context AI gets expensive fast, and one of the biggest reasons is ... Google just quietly dropped something massive, and the memory chip market already felt it. As AI context windows expand to process entire codebases and massive documents, the Key-Value (...

The KV Cache Hack That Saved My GPU: TurboQuant Explained - Detailed Analysis & Overview

Is the "Memory Wall" finally crumbling? In this video, we dive deep into ...

In this video, we break down AI's biggest bottleneck: the Key-Value (...

Google just dropped something that could completely change how AI systems run ...