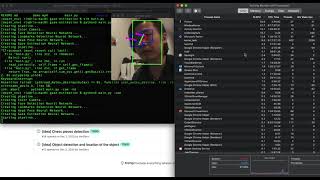

Media Summary: CVPR 2025 presentation of our paper "Enhancing 3D ..."

I used the Inference Engine API from Intel's OpenVINO Toolkit to build this project.

Hello everyone, my name is Gabriel de Funzviera, and today I'll be presenting the paper titled ...

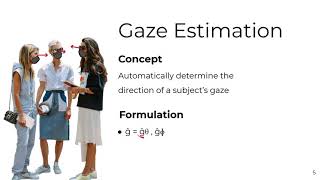

The Gaze Estimation Model - Detailed Analysis & Overview

Paper title: Learning-by-Novel-View-Synthesis for Full-Face Appearance-Based 3D Gaze Estimation

Gaze estimation via self-attention augmented convolutions (SIBGRAPI'21)

It's running much faster now! New updates are coming, apparently.

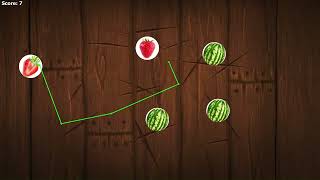

Team Members: Sponsor - Dr. Abdallah Moubayed; Paromita Roy; Harshitha Karur; Rushikesh Patil; Rahul Ghanghas.

NVGaze: An Anatomically-Informed Dataset for Low-Latency, Near-Eye ...

At present, intelligent computing applications are widely used in different domains, including retail stores. The analysis of ...

In this episode of the AI Research Roundup, host Alex explores a cutting-edge paper on computer vision and human behavior ...

This video shows part of the functionality of the OpenGaze software framework. For details please visit www.opengaze.org.

Several technical issues that affect eye-tracking have arisen concomitantly with the steadily increasing sizes of personal displays ...

This is a sample video I created by running the CNN-based ...

This video demonstrates work described in our 2018 ECCV paper ...

![[GAZE 2022] Learning-by-Novel-View-Synthesis for Full-Face Appearance-Based 3D Gaze Estimation](https://i.ytimg.com/vi/BUFTzo5DqXc/mqdefault.jpg)