Media Summary: In this video, you will learn how to call a Cloud Function from a PySpark job on Google Cloud: 1. Create a bucket in the GCP console (gsutil mb gs://spark_job). 2. Run gsutil ls. 3. Run ls. 4. Clone the repo. Automate transfer of data between Data Lake and Data Warehouse on Google ...

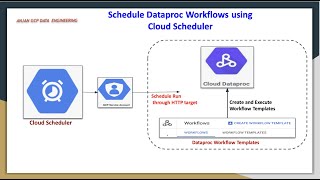

Schedule Dataproc Workflows Using Cloud Scheduler - Detailed Analysis & Overview

Are you trying to orchestrate enterprise-grade data science and machine learning workloads ... are other methods to do that, so one way you can ... In this video, we discuss and build a proof of concept of automation on a GCE VM instance. To know more, watch the video till ...

This tutorial shows how to automate Python script execution on GCP. In this video, I'll show how I moved my Python scripts to the ...