Media Summary: Subramanian's talk promises to serve as a cornerstone for anyone interested in the field of machine learning, offering invaluable ... A complete tutorial on how to train a model on multiple GPUs or multiple servers. I first describe the difference between Data ... For more information about Stanford's online Artificial Intelligence programs visit: To learn more about ...

PyTorch Distributed Towards Large Scale Training - Detailed Analysis & Overview

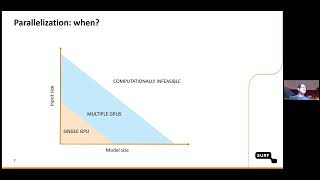

As datasets and models grow in complexity, mastering ... Ready to move beyond single-GPU limits and master ... In the second video of this series, Suraj Subramanian gently introduces you to what is happening under the hood when you train a ...

Are you tired of waiting for your deep learning models to train? In this video, we'll show you how to supercharge your ... Watch Parinita Rahi & Razvan Tanase from Microsoft present their ...