Media Summary: In Fall 2020 and Spring 2021, this was MIT's 18.337J/6.338J: Parallel Computing and Scientific Machine Learning course. You might not know all of the latest methods in differential equations, all of the best knobs to tweak, how to properly handle ... Lessons learned while achieving a 100x speedup of TrajectoryOptimization.jl by eliminating allocations.

Optimizing Serial Code in Julia 1: Memory Models, Mutation, and Vectorization - Detailed Analysis & Overview

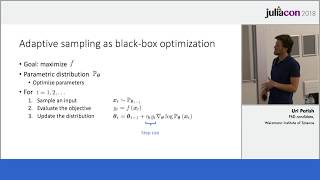

SIMD (Single Instruction, Multiple Data) is a term for when the processor executes the same operation (like addition) on multiple ...

This talk was presented as part of JuliaCon 2021. Abstract: Modern databases can choose between two approaches to evaluating ...

In this video we make small changes to our N-body simulation example to show various easy ...

This talk will present how basic operations on vectors, like summation and dot products, can be made more accurate with respect ...

Highly parallelizable black box combinatorial ...

ArrayAllocators.jl uses the standard array interface to allow faster `zeros` with `calloc`, allocation on specific NUMA nodes on ...

Benchmarking is an essential activity in understanding the performance characteristics of an application. This video explains how ...

In this series, we're gonna define our own ...

Speaker: David P. Sanders (Faculty of Sciences, Universidad Nacional Autónoma de México, Mexico City, Mexico; RelationalAI) ...
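One of the snippets above mentions that summation over a vector can be made more accurate. The talk's own method is not given in the excerpt, so the sketch below is only an illustration using Kahan (compensated) summation, a well-known technique that may or may not be the one presented. It is written in Python for brevity, and the names `naive_sum`, `kahan_sum`, and `data` are hypothetical, chosen for this example only.

```python
import math


def naive_sum(values):
    """Plain left-to-right floating-point summation."""
    total = 0.0
    for x in values:
        total += x
    return total


def kahan_sum(values):
    """Kahan (compensated) summation.

    Assumption: used here only as an illustration of a more accurate
    sum; the excerpt does not say which algorithm the talk covers.
    """
    total = 0.0
    compensation = 0.0  # running estimate of the low-order bits lost so far
    for x in values:
        y = x - compensation            # apply the correction to the next term
        t = total + y                   # low-order bits of y may be lost here
        compensation = (t - total) - y  # recover what was lost
        total = t
    return total


# Hypothetical test data: 0.1 is not exactly representable in binary,
# so rounding error accumulates in the naive loop.
data = [0.1] * 1_000_000

# math.fsum returns a correctly rounded sum and serves as the reference.
reference = math.fsum(data)
naive_error = abs(naive_sum(data) - reference)
kahan_error = abs(kahan_sum(data) - reference)

print("naive error:", naive_error)
print("kahan error:", kahan_error)
```

The compensated version costs a few extra floating-point operations per element, which is usually a small price when the accumulated rounding error of the plain loop matters.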

![12. Optimisation Tips & Tricks [HPC in Julia]](https://i.ytimg.com/vi/UZzFabpkH8Y/mqdefault.jpg)