Media Summary: Deep learning is the compute model for this new era of ... In this episode of TensorFlow Meets, we are joined by Chris Gottbrath from ... In many applications of deep learning models, we would benefit from reduced latency (time taken for ...

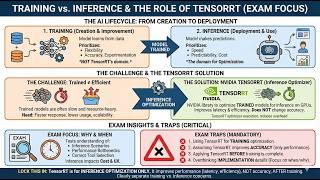

NVIDIA TensorRT: Faster AI Inference and LLM Optimization - Detailed Analysis & Overview

Inside my school and program, I teach you my system to become an ... By the end of this lecture, you will be able to: Understand what ... Original YouTube video: MLOps Community: Maher is an engineering ...

Even the smallest large language models are compute-intensive, which significantly affects the cost of your Generative ...