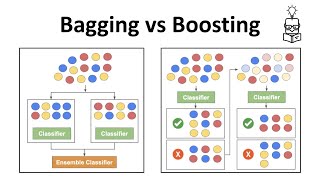

Media Summary: Ensemble Learning Techniques Voting Bagging Boosting Random Forest Stacking in ... This lecture talks about Holdout, Cross Validation (K-Fold Cross Validation), Overfitting & Bootstrapping in Data Warehouse ... The statistical technique of "bagging", to reduce the variance of a classification or regression procedure. A playlist of these ...

Machine Learning 4 2 Bootstrapping - Detailed Analysis & Overview

This video is part of the Udacity course "... Bagging, Boosting, and Stacking are three key ensemble methods in ... 📌 Welcome to Module 3 Part 4 of the Machine Learning series (KTU AMT305 – 2019 Scheme)! In this video, we explain the concept ...
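As a rough illustration of how bagging can reduce variance, the sketch below bootstraps a deliberately unstable base model (1-nearest-neighbour regression) and averages the resulting predictions. The toy data (`y = x + noise`), the query point `0.5`, and every function name here are assumptions made for this example; none of them come from the videos summarised above.

```python
import random
import statistics


def bootstrap_sample(data, rng):
    """Draw len(data) items from data uniformly, with replacement."""
    return [rng.choice(data) for _ in range(len(data))]


def predict_1nn(train, x):
    """Return the y of the training point whose x is closest to the query."""
    return min(train, key=lambda point: abs(point[0] - x))[1]


def predict_bagged(train, x, n_models, rng):
    """Average 1-NN predictions over n_models bootstrap resamples of train."""
    preds = [predict_1nn(bootstrap_sample(train, rng), x) for _ in range(n_models)]
    return statistics.mean(preds)


def make_dataset(n, rng):
    """Hypothetical toy data: x uniform on [0, 1), y = x + Gaussian noise."""
    data = []
    for _ in range(n):
        x = rng.random()
        data.append((x, x + rng.gauss(0.0, 1.0)))
    return data


def compare_variance(trials=200, n=40, n_models=25, seed=0):
    """Estimate the variance of each predictor at x = 0.5 over fresh training sets."""
    rng = random.Random(seed)
    single, bagged = [], []
    for _ in range(trials):
        train = make_dataset(n, rng)
        single.append(predict_1nn(train, 0.5))
        bagged.append(predict_bagged(train, 0.5, n_models, rng))
    return statistics.variance(single), statistics.variance(bagged)


var_single, var_bagged = compare_variance()
print(f"single 1-NN variance: {var_single:.3f}")
print(f"bagged 1-NN variance: {var_bagged:.3f}")
```

The bagged predictor behaves like a weighted average over several nearby training points, which is why its predictions tend to fluctuate less from one training set to the next than those of a single 1-NN model.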

K-Fold Cross-Validation, Stratified K-Fold Cross-Validation, Leave-One-Out Cross-Validation, and Leave-P-Out Cross-Validation in ... In this way, how many estimates will I get?
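One way to see how many estimates each scheme yields is to enumerate its train/test splits and count them. The following is a minimal standard-library sketch; the function names, the contiguous fold layout, and the round-robin stratification are assumptions chosen for illustration, not the procedure used in the lecture.

```python
from itertools import combinations


def k_fold_splits(n, k):
    """Yield (train, test) index lists for k contiguous folds over 0..n-1."""
    fold_sizes = [n // k + (1 if i < n % k else 0) for i in range(k)]
    start = 0
    for size in fold_sizes:
        test = list(range(start, start + size))
        train = list(range(0, start)) + list(range(start + size, n))
        yield train, test
        start += size


def stratified_k_fold_splits(labels, k):
    """Deal each class's indices round-robin into k folds, so every fold
    keeps roughly the overall class proportions."""
    by_class = {}
    for i, y in enumerate(labels):
        by_class.setdefault(y, []).append(i)
    folds = [[] for _ in range(k)]
    for indices in by_class.values():
        for j, i in enumerate(indices):
            folds[j % k].append(i)
    for test in folds:
        held_out = set(test)
        train = [i for i in range(len(labels)) if i not in held_out]
        yield train, sorted(test)


def leave_p_out_splits(n, p):
    """Yield one split for every possible test set of size p."""
    for test in combinations(range(n), p):
        held_out = set(test)
        train = [i for i in range(n) if i not in held_out]
        yield train, list(test)


def leave_one_out_splits(n):
    """Leave-one-out is leave-p-out with p = 1."""
    return leave_p_out_splits(n, 1)


n = 10
labels = [0] * 6 + [1] * 4  # hypothetical class labels for the 10 samples
counts = {
    "k-fold (k=5)": sum(1 for _ in k_fold_splits(n, 5)),
    "stratified k-fold (k=2)": sum(1 for _ in stratified_k_fold_splits(labels, 2)),
    "leave-one-out": sum(1 for _ in leave_one_out_splits(n)),
    "leave-p-out (p=2)": sum(1 for _ in leave_p_out_splits(n, 2)),
}
for name, count in counts.items():
    print(f"{name}: {count} estimates")
```

With 10 samples this gives 5, 2, 10, and 45 estimates respectively: K-fold and stratified K-fold produce k estimates, leave-one-out produces n, and leave-p-out produces "n choose p", which grows quickly and is why it is rarely practical on large datasets.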