
LLM Distillation: Detailed Analysis & Overview

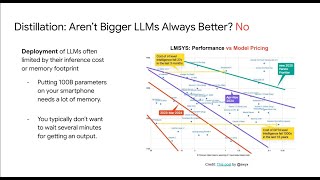

- This video lesson explores the power of Large Language Model …
- Jason Fries, a research scientist at Snorkel AI and Stanford University, discusses the challenges of deploying LLMs and …
- In this video, we sit down with Jonas Hübotter (ETH Zurich) and Idan Shenfeld (MIT) to break down self- …
- Foundation model performance at a fraction of the cost …
- Four techniques to optimize the speed …
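LLM distillation trains a smaller "student" model to imitate a larger "teacher", which is how it aims for foundation-model performance at a fraction of the cost. As a hedged illustration only, here is a minimal sketch of the classic soft-target distillation loss (temperature-scaled KL divergence between teacher and student outputs, blended with ordinary cross-entropy on the true label). It is not taken from any of the videos listed here. The function names and the `temperature` and `alpha` defaults are assumptions chosen for the example, and a real setup would compute this over batches of token logits in a framework such as PyTorch.

```python
import math

def softmax(logits, temperature=1.0):
    """Temperature-scaled softmax over a list of logits."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(z - m) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def distillation_loss(student_logits, teacher_logits, label,
                      temperature=2.0, alpha=0.5):
    """Hypothetical helper: alpha * soft-target KL + (1 - alpha) * hard-label CE."""
    # Soften both distributions with the same temperature
    p_teacher = softmax(teacher_logits, temperature)
    p_student = softmax(student_logits, temperature)
    # KL(teacher || student); scaled by T^2 so its gradients keep a comparable magnitude
    kl = sum(pt * math.log(pt / ps) for pt, ps in zip(p_teacher, p_student) if pt > 0)
    soft_loss = kl * temperature ** 2
    # Ordinary cross-entropy against the ground-truth label at T = 1
    hard_loss = -math.log(softmax(student_logits)[label])
    return alpha * soft_loss + (1 - alpha) * hard_loss

# Usage: a student that already matches the teacher has zero soft-target loss
print(distillation_loss([1.0, 2.0, 3.0], [1.0, 2.0, 3.0], label=2, alpha=1.0))
```

A higher `temperature` flattens the teacher's distribution so the student also learns from the relative probabilities of wrong answers, while `alpha` controls how much weight goes to imitating the teacher versus fitting the labels.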

In this video (Part 1 of our Fine-Tuning Series), we dive into …

![How to Distill LLM? LLM Distilling [Explained] Step-by-Step using Python Hugging Face AutoTrain](https://i.ytimg.com/vi/tpMOF1cT4Fc/mqdefault.jpg)