Media Summary: In this video I cover the AdamW optimizer in comparison with the classical Adam. Also, I underline the differences between ... Ridge Regression is a neat little way to ensure you don't overfit your training data - essentially, you are desensitizing your model ...

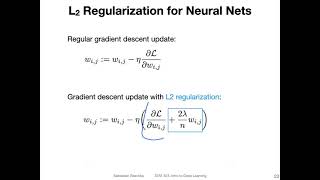

L2 Regularization In Deep Learning And Weight Decay - Detailed Analysis & Overview

For more information about Stanford's online Artificial Intelligence programs visit: This lecture covers: 1.
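The summary mentions AdamW compared with classical Adam, under a title about L2 regularization and weight decay. The following is a minimal sketch of how the two ideas relate, not the code from any of the videos. It assumes a single scalar weight, one update step starting from zero moment estimates, and illustrative values; the names `w`, `grad`, `lr`, `lam` and all three functions are hypothetical.

```python
import math

def sgd_l2_step(w, grad, lr, lam):
    """Plain SGD on loss + (lam / 2) * w**2: the penalty adds lam * w to the gradient."""
    return w - lr * (grad + lam * w)

def sgd_weight_decay_step(w, grad, lr, lam):
    """Plain SGD with weight decay applied directly to the weight."""
    return w - lr * lam * w - lr * grad

def adam_first_step(w, grad, lr, lam, decoupled,
                    beta1=0.9, beta2=0.999, eps=1e-8):
    """First Adam step (t = 1) starting from zero moment estimates.

    decoupled=False: L2 penalty folded into the gradient (Adam with L2).
    decoupled=True:  decay applied to the weight directly (AdamW-style).
    """
    g = grad if decoupled else grad + lam * w
    m_hat = ((1 - beta1) * g) / (1 - beta1)        # bias-corrected first moment
    v_hat = ((1 - beta2) * g * g) / (1 - beta2)    # bias-corrected second moment
    step = lr * m_hat / (math.sqrt(v_hat) + eps)   # normalised gradient step
    decay = lr * lam * w if decoupled else 0.0     # decoupled shrinkage of w
    return w - step - decay

# Illustrative values only (assumptions, not taken from the source).
w, grad, lr, lam = 2.0, 0.5, 0.1, 0.01

sgd_l2 = sgd_l2_step(w, grad, lr, lam)
sgd_wd = sgd_weight_decay_step(w, grad, lr, lam)
adam_l2 = adam_first_step(w, grad, lr, lam, decoupled=False)
adamw = adam_first_step(w, grad, lr, lam, decoupled=True)

print("SGD  + L2:          ", sgd_l2)
print("SGD  + weight decay:", sgd_wd)
print("Adam + L2:          ", adam_l2)
print("AdamW-style:        ", adamw)
```

Under plain SGD the two formulations give the same update, which is why the terms are often used interchangeably. With Adam they diverge: the normalised step has a size of roughly `lr` whatever the gradient scale, so an L2 term folded into the gradient barely changes it, while the decoupled decay still shrinks the weight by `lr * lam * w`.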