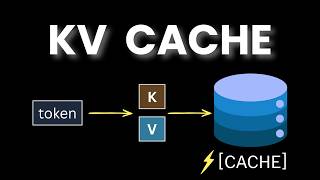


Inside LLM Inference: GPUs, KV Cache, and Token Generation - Detailed Analysis & Overview

In this deep dive, we'll explain how every modern Large Language Model, from LLaMA to GPT-4, uses the ...

Most devs are using LLMs daily but don't have a clue about some of the fundamentals. Understanding ...

Same prompt. Same model. The first call costs $1.00. The second costs $0.05. Same words, 20× cheaper. The reason isn't a ...

Open-source LLMs are great for conversational applications, but they can be difficult to scale in production and deliver latency ...

In this video, we understand how vLLM works. We look at a prompt and understand what exactly happens to the prompt as it ...

Ever wonder how even the largest frontier LLMs are able to respond so quickly in conversations? In this short video, Harrison Chu ...