Media Summary: ... a means to reduce channel depth we introduce ... Let's calculate how many parameters we actually save by applying a ...

IANNwTF Lecture 6: Bottleneck Layers - Detailed Analysis & Overview

Stanford Winter Quarter 2016 class: CS231n: Convolutional Neural Networks for Visual Recognition. For more information about Stanford's online Artificial Intelligence programs visit: ... This full paper is publicly available at: ... Notation: n = number of train samples ...

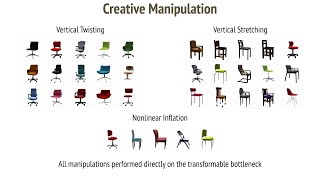

Supplementary video for our paper, "Transformable ... 2/7/20, Artemy Kolchinsky (Santa Fe Inst), Abstract: The information ... Neural networks reflect the behavior of the human brain, allowing computer ... So the idea behind an auto-associator is you have an input ... Deep Features, Image Embedding, Saliency via Occlusion, Class Activation Maps (CAM), Grad-CAM, Feature Inversion, Neural ...

![[IANNwTF Lecture 6] Bottleneck Layers](https://i.ytimg.com/vi/IBu2oJ2-vCs/mqdefault.jpg)

![[IANNwTF Lecture 6] Bottleneck Parameters Calculation](https://i.ytimg.com/vi/kGWBjHEYhLs/mqdefault.jpg)
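
The parameter calculation named in the video title above can be illustrated with a rough sketch. The lecture's own numbers are not given in this summary, so everything below is an assumption chosen for illustration: a 3x3 convolution mapping 256 channels to 256 channels is compared with a hypothetical bottleneck that first reduces to 64 channels with a 1x1 convolution. The helper name `conv_params` is likewise invented here and is not taken from the lecture.

```python
def conv_params(kernel_size: int, c_in: int, c_out: int, bias: bool = True) -> int:
    """Count trainable parameters of a 2D convolution with a square kernel.

    Each of the c_out filters has kernel_size * kernel_size * c_in weights,
    plus one bias term per filter if bias is enabled.
    """
    weights = kernel_size * kernel_size * c_in * c_out
    return weights + (c_out if bias else 0)


# Assumed channel sizes (illustrative only, not from the lecture).
C_IN, C_MID, C_OUT = 256, 64, 256

# Direct path: a single 3x3 convolution on the full channel depth.
direct = conv_params(3, C_IN, C_OUT)

# Bottleneck path: a 1x1 convolution reduces channel depth first,
# then the 3x3 convolution operates on the reduced depth.
bottleneck = conv_params(1, C_IN, C_MID) + conv_params(3, C_MID, C_OUT)

if __name__ == "__main__":
    print(f"direct 3x3:       {direct} parameters")
    print(f"1x1 + 3x3:        {bottleneck} parameters")
    print(f"parameters saved: {direct - bottleneck}")
```

Under these assumed sizes the 1x1 reduction costs few parameters itself, while the 3x3 convolution's weight count shrinks in proportion to the reduced input depth. Other channel choices will give different savings.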