
How NVIDIA GPUs Accelerate Your Python Workflow - Detailed Analysis & Overview

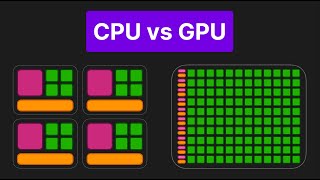

In this video, we take a look at multiple ways in which NVIDIA …

What is CUDA? And how does parallel computing on the …

I explain the ending of exponential computing power growth and the rise of application-specific hardware like …

00:00 Start of Video
00:16 End of Moore's Law
01:15 What is a TPU and ASIC
02:25 How a …

Why does a CPU perform the calculation 1 + 1 …

We introduce RAPIDS, a suite of open-source libraries that allow users to quickly integrate …