Media Summary: Lex Fridman Podcast full episode: Please support this podcast by checking out ... Welcome to the Lecture on Adversarial Examples or Attacks in Explainable AI (XAI). You might have seen cases where CNNs ... This is a reading group talk on the published paper in CVPR 2016 entitled, "

Fooling Neural Networks 1000x Faster - Detailed Analysis & Overview

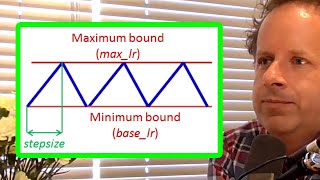

This is a clip from a conversation with Jeremy Howard from Aug 2019. New full episodes every Mon & Thu and 1-2 new clips or a ...

0:00 Multi-GPU Training 2:15 Cyclic Learning Rate Schedules 3:07 Mixup: Beyond Empirical Risk Minimization 3:44 Label ...

Hello and welcome. My partner and I implemented an adversarial attack called Basic Iterative Method (BIM) on Inception v3, ...

A video summary of the paper: Nguyen A, Yosinski J, Clune J. Deep Fooling Neural Network Interpretations via Adversarial Model Manipulation

PyTorch is a deep learning framework used to build artificial intelligence software with Python. Learn how to build a basic ...

Synthetic Gradients were introduced in 2016 by Max Jaderberg and other researchers at DeepMind. They are designed to replace ...

CPUs are often bottlenecks in Machine Learning pipelines. Data fetching, loading, preprocessing and augmentation can be slow ...