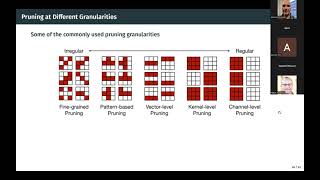

Data Free Parameter Pruning And Quantization - Detailed Analysis & Overview

For many applications, when transfer learning is used to retrain an image classification network for a new task, or when a new ...

Four techniques to optimize the speed ...

tl;dr: This lecture covers various effective model compression techniques such as ...

Are you planning to deploy a deep learning model on an edge device (microcontroller, cell phone, or wearable device)?

Neural networks and neural-network-based architectures are powerful models that can deal with abstract problems, but they are ...

This video is a recording of the second session from our TinyML seminar at Mälardalen University (MDU), focused on model ...

This Tech Talk explores how to compress neural network models so they can run efficiently on embedded systems without ...

Neural networks (NN) are very potent at solving many problems in computer vision, time series analysis, etc. But the ...

One approach that popularized this method is AWQ, activation-aware ...

Tired of slow, expensive AI models? It's time to shrink them down. In this video, Treecapital AI pulls back ...

Class in the course Advanced Machine Learning with Neural Networks 2021 (TIF360 at CTH and FYM360 at GU) held on 27 April ...

In this video I will introduce and explain ...

Authors: Matan Haroush, Itay Hubara, Elad Hoffer, Daniel Soudry. Description: Background: Recently, an extensive amount of ...

In this video, we demonstrate the deep learning ...

Presentation for 11-785 final project on: Learning Highly Sparse Deep Neural Networks through ...