
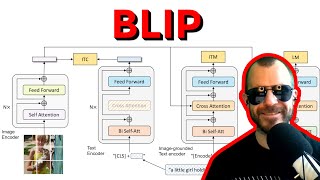

BLIP-2 Image Captioning - Detailed Analysis & Overview

In this session of the Computer Vision Study Group, Johannes walks us through the paper.

This video is a tutorial on how to get started with ...

In this video, we will build a Python script that will allow us to ...

... such as image-text retrieval (+2.7% in average recall), ...

This is a step-by-step demo of installing and running Salesforce BLIP locally.
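The retrieval gain quoted above is reported as average recall. As a rough illustration of what such a number measures, here is a minimal Python sketch of Recall@K for image-text retrieval. It assumes a square similarity matrix in which image i is paired with caption i. The function names `recall_at_k` and `average_recall`, and the choice to average R@1, R@5 and R@10 over both retrieval directions, are illustrative assumptions, not the paper's evaluation code.

```python
from typing import Sequence


def recall_at_k(similarity: Sequence[Sequence[float]], k: int) -> float:
    """Fraction of queries whose ground-truth match ranks in the top k.

    Assumption: similarity[i][j] scores query i against candidate j, and
    the correct candidate for query i is candidate i (one-to-one pairing).
    """
    if k < 1:
        raise ValueError("k must be >= 1")
    if not similarity:
        raise ValueError("similarity matrix must not be empty")
    hits = 0
    for i, row in enumerate(similarity):
        # Rank candidates by descending score; sorted() is stable, so ties
        # keep their original index order.
        ranked = sorted(range(len(row)), key=lambda j: row[j], reverse=True)
        if i in ranked[:k]:
            hits += 1
    return hits / len(similarity)


def average_recall(sim_i2t: Sequence[Sequence[float]], ks=(1, 5, 10)) -> float:
    """Mean of R@k over image->text and text->image retrieval.

    Assumption: averaging these cutoffs in both directions is one common
    convention; a given paper may average a different set of scores.
    """
    # Text->image scores are the transpose of the image->text matrix.
    sim_t2i = [list(col) for col in zip(*sim_i2t)]
    scores = [recall_at_k(sim_i2t, k) for k in ks]
    scores += [recall_at_k(sim_t2i, k) for k in ks]
    return sum(scores) / len(scores)
```

For example, with `sim = [[0.9, 0.1, 0.3], [0.2, 0.4, 0.8], [0.1, 0.7, 0.6]]`, only image 0 retrieves its own caption first, so `recall_at_k(sim, 1)` is 1/3, while `recall_at_k(sim, 2)` is 1.0. Papers usually report these values as percentages.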

... cow. Let's use eight lines of Python code to find out. First, import the right packages, then choose what AI ...

In this tutorial, we will demonstrate how to use a visual language model named "..."

The cost of vision-and-language pre-training has become increasingly prohibitive due to end-to-end training of large-scale ...

SOTA (the very best): BLIP is a new VLP framework that transfers flexibly to vision-language understanding and generation tasks. BLIP effectively ...

Welcome to Technical Arhan Mansoori. In this video, I'll walk you through using BLIP (Bootstrapped Language-...
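Several of the tutorials above describe a short Python captioning script: import the right packages, then choose a model. Below is a minimal sketch of that idea using the BLIP-2 classes in Hugging Face `transformers`. The checkpoint `Salesforce/blip2-opt-2.7b`, the helper name `caption_image`, and the generation length are assumptions for illustration; the videos may use other models or libraries. Running it requires `torch`, `transformers`, and `Pillow`, and the first call downloads a large checkpoint.

```python
def caption_image(
    image_path: str,
    model_name: str = "Salesforce/blip2-opt-2.7b",  # assumed checkpoint
    max_new_tokens: int = 30,  # assumed caption length limit
) -> str:
    """Generate a caption for one image with a BLIP-2 checkpoint (hypothetical helper)."""
    # Heavy dependencies are imported lazily so this module can be
    # imported even where they are not installed.
    import torch
    from PIL import Image
    from transformers import Blip2ForConditionalGeneration, Blip2Processor

    device = "cuda" if torch.cuda.is_available() else "cpu"
    # Half precision roughly halves GPU memory; use float32 on CPU.
    dtype = torch.float16 if device == "cuda" else torch.float32

    processor = Blip2Processor.from_pretrained(model_name)
    model = Blip2ForConditionalGeneration.from_pretrained(
        model_name, torch_dtype=dtype
    ).to(device)

    image = Image.open(image_path).convert("RGB")
    # Without a text prompt, generation yields a free-form caption.
    # BatchFeature.to casts only floating-point tensors to `dtype`.
    inputs = processor(images=image, return_tensors="pt").to(device, dtype)
    generated_ids = model.generate(**inputs, max_new_tokens=max_new_tokens)
    return processor.batch_decode(generated_ids, skip_special_tokens=True)[0].strip()
```

Usage would look like `print(caption_image("photo.jpg"))`, where `photo.jpg` is a hypothetical path. This sketch reloads the weights on every call to stay short; for a batch of images, load the processor and model once and reuse them.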

In today's tutorial, we show you how to create a fully automated process for generating ...