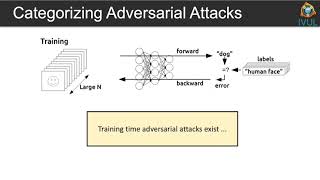

Media Summary: Are your image classification models actually secure? In this video, we dive deep into ... In this video, I describe what the gradient with respect to the input is, and I implement two specific examples of how one can use it: ... Today we're going to be presenting the paper "Towards deep learning models resistant to ..."
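The gradient with respect to the input is the derivative of the loss with respect to the pixels rather than the model weights. Below is a minimal PyTorch sketch under stated assumptions: the classifier is a hypothetical toy linear model on random 3x8x8 "images", `input_gradient` is our own helper name, and the two uses shown (a saliency map and the sign direction that FGSM steps in) are illustrative choices, not necessarily the two examples from the video.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F

# Hypothetical toy classifier: 3x8x8 inputs, 10 classes (illustration only).
torch.manual_seed(0)
model = nn.Sequential(nn.Flatten(), nn.Linear(3 * 8 * 8, 10))
model.eval()

def input_gradient(model, x, y):
    """Return d(loss)/d(x) for a batch x with integer labels y."""
    # Mark the input (not just the weights) as something to differentiate against.
    x = x.clone().detach().requires_grad_(True)
    loss = F.cross_entropy(model(x), y)
    # autograd.grad returns the gradient directly and leaves parameter .grad fields untouched.
    (grad,) = torch.autograd.grad(loss, x)
    return grad

x = torch.rand(4, 3, 8, 8)        # batch of 4 random "images" in [0, 1]
y = torch.tensor([0, 1, 2, 3])    # arbitrary labels for the toy example
g = input_gradient(model, x, y)   # same shape as x

# Use 1: a simple saliency map, the largest absolute gradient over color channels per pixel.
saliency = g.abs().amax(dim=1)    # shape (4, 8, 8)
# Use 2: the sign of the gradient, the per-pixel direction that increases the loss fastest
# under an L-infinity budget (the direction FGSM uses).
direction = g.sign()
print(g.shape, saliency.shape)
```

Using `torch.autograd.grad` instead of `loss.backward()` is a design choice here: it avoids accumulating gradients into the model's parameters while we only care about the input.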

Adversarial Robustness Tutorial: FGSM vs. PGD Attacks in PyTorch (Hands-On Code) - Detailed Analysis & Overview

This video is part of the Introduction to ML Safety course (...) and was recorded by Dan Hendrycks at the ... For more information about Stanford's Artificial Intelligence professional and graduate programs, visit: ... October ... We will go over what the difference is between ...
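The page promises a hands-on FGSM vs. PGD comparison in PyTorch, but none of that code survives here. The following is a minimal sketch of both attacks under an L-infinity budget, assuming pixel values in [0, 1] and a cross-entropy classifier. The names `fgsm` and `pgd`, the toy model, and the hyperparameters (eps = 8/255, alpha = 2/255, 10 steps, random start) are our own illustrative choices, not the video's code. FGSM takes a single step of size eps along the sign of the input gradient; PGD, the attack associated with Madry et al.'s work on robust training (apparently the paper mentioned above), repeats smaller signed steps and projects back onto the eps-ball after each one.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F

def fgsm(model, x, y, eps):
    """Fast Gradient Sign Method: one step of size eps along sign(grad_x loss)."""
    x_adv = x.clone().detach().requires_grad_(True)
    loss = F.cross_entropy(model(x_adv), y)
    (grad,) = torch.autograd.grad(loss, x_adv)
    x_adv = x_adv + eps * grad.sign()
    return x_adv.clamp(0.0, 1.0).detach()  # assumes valid pixels live in [0, 1]

def pgd(model, x, y, eps, alpha, steps, random_start=True):
    """L-infinity PGD: repeated signed-gradient steps of size alpha,
    each followed by projection back onto the eps-ball around x."""
    x = x.detach()
    x_adv = x.clone()
    if random_start:
        # Start from a uniformly random point inside the eps-ball.
        x_adv = (x_adv + torch.empty_like(x).uniform_(-eps, eps)).clamp(0.0, 1.0)
    for _ in range(steps):
        x_adv.requires_grad_(True)
        loss = F.cross_entropy(model(x_adv), y)
        (grad,) = torch.autograd.grad(loss, x_adv)
        x_adv = x_adv.detach() + alpha * grad.sign()
        # Projection: keep each pixel within [x - eps, x + eps], then within [0, 1].
        x_adv = torch.min(torch.max(x_adv, x - eps), x + eps).clamp(0.0, 1.0)
    return x_adv.detach()

# Usage on a hypothetical toy model and random data.
torch.manual_seed(0)
model = nn.Sequential(nn.Flatten(), nn.Linear(3 * 8 * 8, 10)).eval()
x = torch.rand(4, 3, 8, 8)
y = torch.tensor([0, 1, 2, 3])
eps = 8 / 255
x_fgsm = fgsm(model, x, y, eps)
x_pgd = pgd(model, x, y, eps=eps, alpha=2 / 255, steps=10)
# Both perturbations stay inside the eps-ball (up to float rounding); both lines print True.
print((x_fgsm - x).abs().max().item() <= eps + 1e-6)
print((x_pgd - x).abs().max().item() <= eps + 1e-6)
```

The trade-off the sketch makes visible: FGSM costs one forward/backward pass, while PGD costs `steps` passes but searches the eps-ball more thoroughly, which is why it is generally treated as the stronger attack for evaluating and training robust models.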


![[Attack AI in 5 mins] Adversarial ML #1. FGSM](https://i.ytimg.com/vi/4TseynD_v7M/mqdefault.jpg)