Media Summary: ... an integer value that's where the second leg of ... Quantization, Quantization Range, Quantization Granularity, Dynamic and Static Quantization, ... presents the “Introduction to Shrinking Models with Quantization-aware Training and

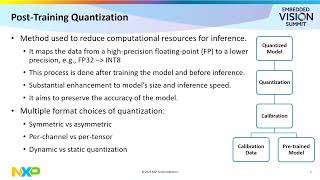

8 2 Post Training Quantization - Detailed Analysis & Overview

... an integer value that's where the second leg of ... Quantization, Quantization Range, Quantization Granularity, Dynamic and Static Quantization, ... presents the “Introduction to Shrinking Models with Quantization-aware Training and Hi we are group 11 and we are going to present our project which is on SmoothQuant - Accurate and Efficient Post-Training Quantization for Large Language Models GGUF quantization is currently the most popular tool for

Try Voice Writer - speak your thoughts and let AI handle the grammar: Four techniques to optimize the speed ... Run massive AI models on your laptop! Learn the secrets of LLM Post-Training Quantization on Diffusion Models (CVPR 2023) Large language models (LLMs) show excellent performance but are compute- and memory-intensive. This talk was given at a compression study group as below: