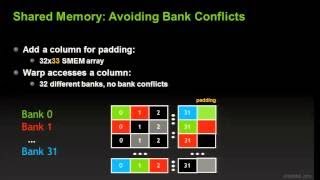


02 Cuda Shared Memory - Detailed Analysis & Overview

This video tutorial has been taken from Learning ...

This video is part of an online course, Intro to Parallel Programming. Check out the course here: ...

You get to learn how to reduce global memory access by storing frequently used data in ...

Programming for GPUs Course: Introduction to OpenACC 2.0

In this video we write a histogram kernel from scratch that uses ...

In this video, we take a deep dive into a reduction kernel in ...
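To make the idea of cutting global memory traffic by keeping frequently used data on-chip more concrete, here is a minimal sketch. It is not taken from any of the videos listed here. The kernel name `stencil1d`, the constants `RADIUS` and `BLOCK`, and the choice of a 1D sum stencil are all assumptions made for illustration. Each block copies its slice of the input (plus a small halo on each side) into `__shared__` memory once, and every thread then reads its neighbours from that tile instead of going back to global memory for each one.

```cuda
#include <cstdio>
#include <cuda_runtime.h>

#define RADIUS 3     // hypothetical stencil radius (illustrative)
#define BLOCK  256   // threads per block; the kernel assumes blockDim.x == BLOCK

// Each output element is the sum of the 2*RADIUS+1 inputs centred on it.
// Out-of-range neighbours are treated as 0.
__global__ void stencil1d(const float* in, float* out, int n) {
    // One tile per block: BLOCK elements plus a halo of RADIUS on each side.
    __shared__ float tile[BLOCK + 2 * RADIUS];

    int g = blockIdx.x * blockDim.x + threadIdx.x;  // global index
    int l = threadIdx.x + RADIUS;                   // index inside the tile

    // Every thread loads its own element from global memory exactly once.
    tile[l] = (g < n) ? in[g] : 0.0f;

    // The first RADIUS threads of the block also load the two halos.
    if (threadIdx.x < RADIUS) {
        int left  = g - RADIUS;
        int right = g + BLOCK;
        tile[l - RADIUS] = (left >= 0 && left < n) ? in[left] : 0.0f;
        tile[l + BLOCK]  = (right < n) ? in[right] : 0.0f;
    }

    // Wait until the whole tile is populated before anyone reads it.
    __syncthreads();

    if (g < n) {
        float sum = 0.0f;
        for (int k = -RADIUS; k <= RADIUS; ++k) {
            sum += tile[l + k];  // served from shared memory, not global memory
        }
        out[g] = sum;
    }
}

int main() {
    const int n = 1 << 20;
    float* in = nullptr;
    float* out = nullptr;

    // Managed memory keeps the host code short; explicit cudaMemcpy works too.
    if (cudaMallocManaged(&in, n * sizeof(float)) != cudaSuccess ||
        cudaMallocManaged(&out, n * sizeof(float)) != cudaSuccess) {
        printf("allocation failed\n");
        return 1;
    }
    for (int i = 0; i < n; ++i) in[i] = 1.0f;

    int blocks = (n + BLOCK - 1) / BLOCK;
    stencil1d<<<blocks, BLOCK>>>(in, out, n);

    cudaError_t err = cudaDeviceSynchronize();
    if (err != cudaSuccess) {
        printf("CUDA error: %s\n", cudaGetErrorString(err));
        return 1;
    }

    printf("out[0] = %.1f, out[n/2] = %.1f\n", out[0], out[n / 2]);

    cudaFree(in);
    cudaFree(out);
    return 0;
}
```

In this sketch a naive kernel would issue `2 * RADIUS + 1` global loads per thread, while the tiled version issues roughly one per thread plus the halo loads, so the saving grows with the stencil radius. The same load, synchronize, then reuse pattern is what tiled matrix multiplication, histogram, and reduction kernels typically build on.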

Instructor - Prof. Wen-mei Hwu. Playlist - ...

Wow, this has been a tricky tute. I originally tried to cover much more and added some coding at the end, but it was too long to be ...

Tiled (general) Matrix Multiplication from scratch in ...

NVIDIA GPUs offer access to a dedicated L1 cache called " ...